Meta AI Introduces CoCoMix: A Pretraining Framework Integrating Token Prediction with Continuous Concepts

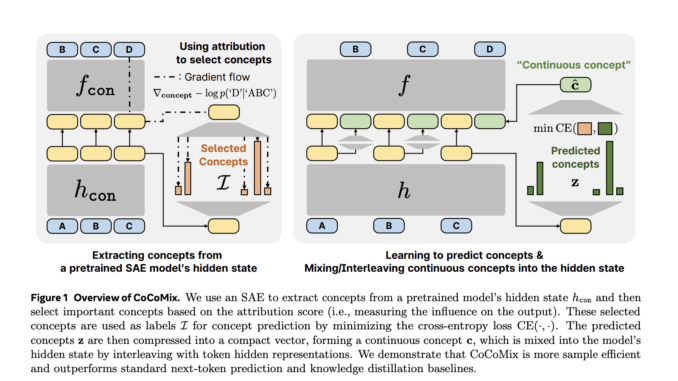

The dominant approach to pretraining large language models (LLMs) is next-token prediction, which has proven effective at capturing linguistic patterns. However, the method has notable limitations. Language tokens often convey surface-level information, requiring […]